Customer support is one of the clearest places where AI agents are moving from demos into real operations. In 2026, the question is no longer “Can AI answer simple FAQs?” The real question is: can an AI support agent understand a customer’s issue, retrieve the right policy, check the account record, perform a safe action, and escalate to a human with enough context when the case is too complex?

The answer is increasingly yes, but only for companies that treat AI agents as support infrastructure, not as a chatbot widget. Zendesk positions AI agents as autonomous AI-powered bots that can work across social, web, mobile, voice, and email channels and support many languages (Zendesk AI agents). Salesforce describes AI customer service agents as systems that can understand and respond to customer inquiries within the guardrails provided, including both simple questions and more complex issues such as returns (Salesforce AI customer service agents).

This guide explains the state of AI agents for customer support in 2026: what they can do, where they fail, how they should hand off to humans, which metrics matter, and how SaaS companies should architect a reliable support-agent system.

The 2026 Shift: From Chatbots to Action-Oriented Support Agents

Traditional support chatbots were mostly scripted decision trees. They worked when the customer’s issue matched a predefined path, but failed when the user asked something unexpected. AI agents are different because they can combine language understanding, retrieval, tool use, and workflow execution.

OpenAI’s Agents SDK documentation describes agents as applications that can plan, call tools, collaborate across specialists, and keep enough state to complete multi-step work (OpenAI Agents SDK guide). For customer support, that means an agent can do more than write a response. It can inspect the customer’s subscription, check an order, search policy documents, create a ticket, summarize prior conversation, and pass the case to a human.

The market pressure is real. Gartner reported in February 2026 that 91% of service and support leaders surveyed were under executive pressure to implement AI, and that more than 80% of organizations planned to expand human agent responsibilities as AI reshapes frontline roles (Gartner service AI survey).

What AI Support Agents Can Handle Well

AI support agents are strongest when the workflow has clear information, repeatable actions, and good escalation rules. In 2026, the best use cases include:

- FAQ and policy answers: return policy, cancellation rules, password reset, account setup, billing basics.

- Order and account lookup: check order status, subscription status, delivery details, or plan limits.

- Ticket triage: classify issue type, urgency, product area, sentiment, and escalation path.

- Knowledge-base retrieval: answer from help docs, internal policies, product documentation, and troubleshooting guides.

- Guided troubleshooting: collect symptoms, ask clarifying questions, suggest steps, and detect failure cases.

- Agent assist: draft replies, summarize conversations, suggest macros, and surface relevant articles for human agents.

- Safe self-service actions: create support tickets, update contact info, resend invoices, generate return labels, or route cases.

Salesforce lists benefits of AI in service such as productivity, improved response times, cost reduction through automation, personalized customer experiences, and better insights (Salesforce AI for customer service). Those benefits are achievable when the agent is connected to the right knowledge and business systems.

Where Human Agents Still Matter

AI agents are powerful, but customer support is not only information retrieval. Support often involves judgment, empathy, exception handling, and trust. Humans still matter when the customer is angry, the policy is ambiguous, the issue involves money, the product is broken, or the support answer affects safety, legal risk, or reputation.

Gartner’s customer service guidance emphasizes that future service agents will need emotional intelligence, technical proficiency, and adaptability to AI tools and processes (Gartner future of customer service agents). That is the realistic future: AI handles more repetitive work, while humans handle higher-value judgment, escalation, and relationship repair.

A mature AI support strategy does not try to hide humans. It gives customers fast self-service when the issue is clear and a smooth human handoff when the issue is not.

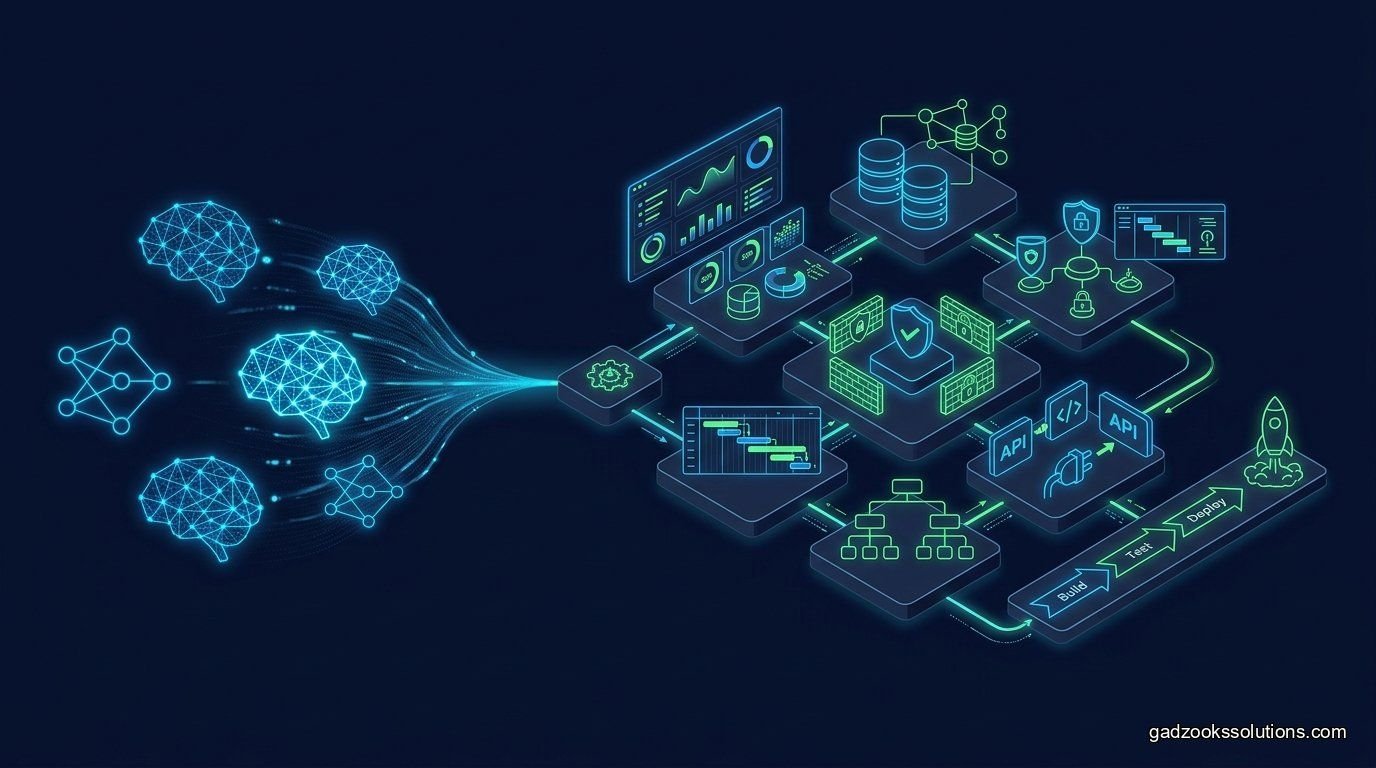

A Practical AI Support Agent Architecture

A production support agent needs more than a model call. It needs a complete architecture:

- Conversation layer: chat, email, voice, social, web, mobile, or in-app support.

- Identity layer: authenticated user, account ID, workspace, plan, language, and permissions.

- Knowledge layer: help center articles, internal runbooks, policy documents, product docs, and troubleshooting guides.

- Tool layer: CRM lookup, order lookup, billing system, ticket system, refunds, account updates, and notifications.

- Agent reasoning layer: intent detection, retrieval, tool choice, answer drafting, and escalation decision.

- Guardrail layer: permission checks, policy rules, sensitive data handling, and human approval for risky actions.

- Observability layer: logs, traces, resolution outcomes, escalation reasons, cost, latency, and quality feedback.

The difference between a weak AI chatbot and a useful AI support agent is that the useful agent knows what it is allowed to do, where to get information, when to stop, and when to bring in a human.
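As a rough illustration of that last point, the sketch below shows one way the reasoning and guardrail layers might turn a single conversation turn into an "answer", "act", or "escalate" decision. Everything in it is hypothetical: the `Customer` and `Turn` types, the tool names, the permission strings, and the 0.7 confidence threshold are assumptions made for the example, not part of any vendor's API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Customer:
    account_id: str
    authenticated: bool
    permissions: set[str] = field(default_factory=set)


@dataclass
class Turn:
    intent: str                           # label from intent detection
    confidence: float                     # classifier confidence in [0, 1]
    articles: list[str]                   # knowledge-layer hits (article IDs)
    requested_tool: Optional[str] = None  # tool the agent wants to call, if any


# Hypothetical registry: tool name -> permission the customer must hold.
TOOL_PERMISSIONS = {
    "order_lookup": "orders:read",
    "resend_invoice": "billing:read",
}

# Hypothetical intents that always go to a human.
ESCALATE_INTENTS = {"legal", "security_incident"}


def decide(customer: Customer, turn: Turn, min_confidence: float = 0.7):
    """Return (decision, detail) where decision is answer, act, or escalate."""
    if turn.intent in ESCALATE_INTENTS:
        return ("escalate", "intent requires a human")
    if turn.confidence < min_confidence:
        return ("escalate", "low intent confidence")
    if turn.requested_tool is not None:
        if not customer.authenticated:
            return ("escalate", "customer not authenticated")
        required = TOOL_PERMISSIONS.get(turn.requested_tool)
        if required is None:
            return ("escalate", "unknown tool")
        if required not in customer.permissions:
            return ("escalate", "missing permission")
        return ("act", turn.requested_tool)
    if not turn.articles:
        return ("escalate", "no supporting knowledge")
    return ("answer", turn.articles[0])
```

The ordering is a design choice: the stop conditions run before any tool or answer path, so an agent built this way fails toward a human rather than toward a guess.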

Knowledge Base Quality Is the Hidden Bottleneck

Most AI support failures are not purely model failures. They are knowledge failures. The answer may be missing, outdated, duplicated, contradictory, or written for internal teams instead of customers. If your help center is outdated, your AI agent will repeat outdated information faster.

Before launching an AI support agent, audit your knowledge base:

- Remove outdated policies and duplicate articles.

- Add dates, owners, regions, product versions, and visibility rules.

- Separate public customer articles from internal-only runbooks.

- Write troubleshooting guides in step-by-step format.

- Add escalation rules for refunds, legal issues, security issues, and angry customers.

- Use analytics to identify top unresolved topics and missing answers.
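Parts of this audit can be automated. The sketch below flags stale articles, articles without an owner, invalid visibility values, and likely duplicates by normalized title. The `Article` fields and the 180-day review window are assumptions chosen for illustration. A real help center export will have its own schema, and title matching is only a crude stand-in for duplicate detection.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Article:
    article_id: str
    title: str
    last_reviewed: date
    owner: Optional[str]
    visibility: str  # expected: "public" or "internal"


def audit(articles: list[Article], today: date, max_age_days: int = 180):
    """Return {article_id: [issues]} for articles that need attention."""
    findings: dict[str, list[str]] = {}
    first_seen: dict[str, str] = {}  # normalized title -> first article ID
    for a in articles:
        issues = []
        if (today - a.last_reviewed).days > max_age_days:
            issues.append("stale")
        if not a.owner:
            issues.append("no_owner")
        if a.visibility not in ("public", "internal"):
            issues.append("bad_visibility")
        # Normalize case and whitespace so near-identical titles collide.
        key = " ".join(a.title.lower().split())
        if key in first_seen:
            issues.append(f"duplicate_of:{first_seen[key]}")
        else:
            first_seen[key] = a.article_id
        if issues:
            findings[a.article_id] = issues
    return findings
```

Running a check like this on a schedule, rather than once before launch, keeps the findings aligned with what the agent is actually retrieving.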

Zendesk’s 2026 CX Trends report page highlights contextual intelligence as a major theme shaping customer experience (Zendesk CX Trends 2026). Contextual intelligence depends on clean, accessible, permission-aware knowledge.

Human Handoff: The Most Important Support-Agent Feature

A support AI agent should not be judged only by how often it avoids human involvement. It should also be judged by whether it escalates correctly. Bad automation traps customers in loops. Good automation resolves simple issues and makes complex escalations faster.

A strong handoff should include:

- Customer identity and account details.

- Conversation summary.

- Detected intent and sentiment.

- Steps already attempted.

- Relevant help articles or policies.

- Tool results such as order status or subscription status.

- Reason for escalation.

- Suggested next action for the human agent.
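The checklist above maps naturally onto a structured payload. The following is a minimal sketch of such a handoff record, assuming a ticketing system that accepts a JSON note. The `Handoff` class, its field names, and the choice of required fields are illustrative assumptions, not a specific platform's schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Handoff:
    account_id: str
    summary: str
    intent: str
    sentiment: str
    steps_attempted: list[str]
    articles: list[str]
    tool_results: dict[str, str]
    escalation_reason: str
    suggested_action: str

    # Fields a human agent should never have to ask for again (assumed set).
    REQUIRED = ("account_id", "summary", "intent",
                "escalation_reason", "suggested_action")

    def missing_fields(self) -> list[str]:
        """List required fields that are empty, so the handoff can be blocked."""
        return [name for name in self.REQUIRED if not getattr(self, name)]

    def to_ticket_note(self) -> str:
        """Serialize the handoff as JSON for an internal ticket note."""
        return json.dumps(asdict(self), indent=2)
```

Validating the record before routing means an incomplete handoff is caught by the system rather than discovered by the human agent mid-conversation.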

OpenAI’s own help center describes a flow where users first interact with a virtual assistant, and a human agent can join if the bot cannot resolve the issue (OpenAI support flow). That pattern is becoming standard: AI first, human when needed, context preserved throughout.

Metrics That Actually Matter

Do not evaluate AI support agents only by deflection rate. A high deflection rate can be bad if customers leave frustrated or cases are silently unresolved. Intercom defines its Fin AI Agent automation rate as the portion of total support conversations fully resolved by the AI agent without a human teammate needing to step in (Intercom automation rate definition).

For production support teams, track a balanced scorecard:

| Metric | What It Measures | Why It Matters |
|---|---|---|
| Automation rate | Share of conversations resolved without human help. | Shows scale, but must be paired with quality. |
| Resolution quality | Whether the customer’s real issue was solved. | Prevents false deflection. |
| Escalation accuracy | Whether the agent escalated at the right time. | Prevents frustration and risky automation. |
| CSAT after AI interaction | Customer satisfaction after agent involvement. | Measures experience, not just cost savings. |
| Hallucination rate | Incorrect or unsupported answers. | Critical for trust and compliance. |
| Cost per resolution | AI, tool, platform, and human cost per solved case. | Keeps automation economically honest. |
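To make the scorecard concrete, here is a minimal sketch that computes these metrics from reviewed conversation records. The `Conversation` fields are assumptions: they presume each conversation has already been labeled by QA review (whether the issue was really solved, whether escalation was warranted, whether an answer was unsupported). The exact formulas are one reasonable reading of the table, not an industry standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Conversation:
    resolved_by_ai: bool         # closed with no human involvement
    issue_solved: bool           # QA label: the real issue was solved
    escalated: bool              # the agent handed off to a human
    should_have_escalated: bool  # QA label: a handoff was warranted
    csat: Optional[int]          # 1-5 survey score, None if unanswered
    unsupported_answer: bool     # QA label: incorrect or unsupported answer
    cost_usd: float              # AI, tool, platform, and human cost


def scorecard(convs: list[Conversation]) -> dict:
    n = len(convs)
    if n == 0:
        raise ValueError("no conversations to score")
    ai_closed = [c for c in convs if c.resolved_by_ai]
    solved = [c for c in convs if c.issue_solved]
    rated = [c.csat for c in convs if c.csat is not None]
    return {
        "automation_rate": len(ai_closed) / n,
        # Share of AI-closed conversations where the issue was truly solved.
        "resolution_quality": (
            sum(c.issue_solved for c in ai_closed) / len(ai_closed)
            if ai_closed else None
        ),
        "escalation_accuracy":
            sum(c.escalated == c.should_have_escalated for c in convs) / n,
        "avg_csat": sum(rated) / len(rated) if rated else None,
        "hallucination_rate": sum(c.unsupported_answer for c in convs) / n,
        "cost_per_resolution":
            sum(c.cost_usd for c in convs) / len(solved) if solved else None,
    }
```

Dividing total cost by solved cases, rather than by all conversations, is deliberate: unresolved conversations still cost money, and this version makes them raise the cost per resolution instead of hiding in the denominator.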

Intercom’s 2026 Customer Service Transformation Report says improving customer experience became the top priority for 2026, cited by 58% of teams, up from 28% the previous year (Intercom 2026 Customer Service Transformation Report). That shift matters: teams are no longer asking only whether AI works, but whether it improves the support experience.

Security and Privacy Risks

Customer support agents often touch sensitive information: emails, addresses, order history, payments, billing issues, complaints, internal notes, and sometimes identity documents. AI agents must be designed with strict data boundaries.

- Do not expose internal-only notes to customers.

- Filter retrieval by customer, tenant, team, and role.

- Redact payment data, tokens, credentials, and unnecessary personal information.

- Require human approval for refunds, account deletion, legal responses, and compensation.

- Log all tool calls and sensitive actions.

- Test prompt injection against knowledge base documents and customer messages.

- Keep the AI agent from following instructions hidden inside retrieved documents.

The more tools an AI support agent can call, the more important permissions become. A read-only FAQ agent is one risk level. An agent that can refund customers, update account records, or send emails is a different risk level entirely.
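Two of the data-boundary rules above, filtering retrieval by tenant and role and redacting sensitive strings, can be sketched in a few lines. The `Doc` type, the role names, and the regular expressions are illustrative assumptions. The patterns in particular are deliberately simple and would miss many real-world formats, so treat them as placeholders for a proper redaction service.

```python
import re
from dataclasses import dataclass


@dataclass
class Doc:
    doc_id: str
    tenant_id: str
    visibility: str  # "public" or "internal"
    text: str


# Hypothetical roles that are allowed to see internal-only documents.
INTERNAL_ROLES = {"support_agent"}

# Simplistic patterns, for illustration only.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")        # 13-16 digit runs
TOKEN_RE = re.compile(r"\b(?:sk|tok)_[A-Za-z0-9]{8,}\b")  # assumed token shape


def visible_docs(docs: list[Doc], tenant_id: str, role: str) -> list[Doc]:
    """Keep only documents for this tenant that the role may see."""
    allowed = {"public", "internal"} if role in INTERNAL_ROLES else {"public"}
    return [d for d in docs
            if d.tenant_id == tenant_id and d.visibility in allowed]


def redact(text: str) -> str:
    """Mask card-like numbers and token-like strings before logging or prompting."""
    text = CARD_RE.sub("[REDACTED_CARD]", text)
    return TOKEN_RE.sub("[REDACTED_TOKEN]", text)
```

Applying the filter before retrieval results reach the model, instead of asking the model to ignore what it should not see, keeps the boundary enforceable in code. Unknown roles fall back to public-only access.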

Implementation Roadmap for SaaS Teams

Phase 1: Agent assist before full automation

Start with internal agent-assist features: conversation summaries, suggested replies, knowledge article recommendations, and ticket classification. This creates value while keeping humans in control.

Phase 2: Controlled self-service

Let AI resolve low-risk, well-documented issues such as password resets, status questions, setup guidance, and common troubleshooting flows.

Phase 3: Tool-connected support

Connect the agent to account systems, CRM, ticketing, billing, order management, or internal APIs. Add strong permission checks and observability before allowing actions.

Phase 4: Human-in-the-loop workflows

For medium-risk actions, let the AI prepare the response or action but ask a human to approve. This is ideal for refunds, customer complaints, billing disputes, and policy exceptions.
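One way to implement this pattern is an action gate that executes low-risk actions immediately, queues anything that needs approval, and records every decision. The sketch below is a minimal in-memory version. The `ActionGate` class, the action names, and the risk tiers are assumptions for illustration. A production system would persist the queue and audit log and verify the approver's identity.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical risk tiers; anything not listed is rejected outright.
RISK = {
    "resend_invoice": "low",
    "update_contact": "low",
    "issue_refund": "needs_approval",
}


@dataclass
class ActionGate:
    handlers: dict[str, Callable[..., None]]
    audit_log: list[tuple[str, str]] = field(default_factory=list)
    pending: list[tuple[str, dict]] = field(default_factory=list)

    def request(self, action: str, args: dict) -> str:
        """Called by the AI agent: execute, queue for approval, or reject."""
        risk = RISK.get(action)
        if risk is None:
            self.audit_log.append((action, "rejected"))
            return "rejected"
        if risk == "needs_approval":
            self.pending.append((action, args))
            self.audit_log.append((action, "queued"))
            return "queued"
        self.handlers[action](**args)
        self.audit_log.append((action, "executed"))
        return "executed"

    def approve(self, index: int, approver: str) -> str:
        """Called by a human: run a queued action and record who approved it."""
        action, args = self.pending.pop(index)
        self.handlers[action](**args)
        self.audit_log.append((action, f"approved_by:{approver}"))
        return "executed"
```

Because the agent only ever calls `request`, the approval requirement is enforced by the gate rather than by prompt instructions, which is what makes it hold up against prompt injection.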

Phase 5: Continuous optimization

Review failed cases weekly. Improve the knowledge base, update prompts, add missing tools, refine escalation rules, and refresh evaluation sets with the new failure cases.

Common Mistakes to Avoid

Mistake 1: Launching with a weak knowledge base

If the knowledge base is wrong, the AI will scale wrong answers. Fix documentation before blaming the model.

Mistake 2: Optimizing only for deflection

Deflection without resolution damages trust. Customers care about getting the right answer quickly, not whether a company avoided a human ticket.

Mistake 3: No clear escalation path

AI agents must know when they are not enough. Escalation should be fast, contextual, and visible to the customer.

Mistake 4: Giving the agent unsafe tools too early

Do not let an early support agent issue refunds, change subscriptions, or update sensitive records without approval and audit logs.

Mistake 5: No owner for AI performance

AI support systems need ongoing ownership. Intercom’s customer service planning guidance notes that when no one owns AI agent performance, feedback gets lost and improvements stall (Intercom AI performance ownership guidance).

Final Takeaway

AI agents are changing customer support, but the winning teams will not be the ones that blindly automate the most tickets. They will be the teams that combine clean knowledge, safe tool access, human escalation, observability, and continuous improvement.

In 2026, customer support AI is becoming less about replacing humans and more about rebuilding the support operating model. AI handles repetitive work, humans handle judgment, and the architecture connects both into one customer experience.

Build AI Support Agents with Gadzooks Solutions

Gadzooks Solutions helps SaaS companies build production-ready AI support agents. We design knowledge-base retrieval, CRM integrations, ticket workflows, customer handoff logic, evaluation dashboards, guardrails, and human-in-the-loop support systems.

If your support team is drowning in repetitive tickets but you do not want to damage customer trust with a weak chatbot, we can help you build an AI support agent that is safe, useful, and measurable.

FAQ: AI Agents for Customer Support

Are AI agents better than chatbots for customer support?

Yes, when they are designed properly. AI agents can retrieve knowledge, use tools, inspect customer context, and escalate with summaries, while traditional chatbots usually follow predefined scripts.

Can AI support agents handle complex customer issues?

They can handle some complex issues when they have access to the right knowledge and tools, but high-risk, emotional, legal, billing-dispute, or ambiguous cases should still escalate to humans.

What should an AI support agent integrate with?

Common integrations include help centers, ticketing systems, CRM records, order management, billing tools, subscription systems, product telemetry, and internal runbooks.

How do you measure AI customer support success?

Measure resolution quality, automation rate, escalation accuracy, CSAT, time to resolution, hallucination rate, human override rate, and cost per resolution.

What is the safest way to launch AI support?

Start with agent assist, then automate low-risk questions, then add tools gradually with permissions, logs, and human approval for sensitive actions.

Sources

- Zendesk AI agents

- Zendesk CX Trends 2026

- Zendesk guide to AI customer service agents

- Intercom 2026 Customer Service Transformation Report

- Intercom Fin AI Agent automation rate

- Intercom AI performance ownership guidance

- Salesforce AI customer service agents

- Salesforce AI for customer service and support

- Gartner survey on AI pressure in customer service

- Gartner future of customer service agents

- OpenAI Agents SDK guide

- OpenAI support flow example