Cursor AI vs Claude Code is one of the most important comparisons for engineering teams adopting AI-assisted development in 2026. Both tools can help developers understand codebases, modify files, generate tests, and move faster. But they are not the same type of product. Cursor is an AI-first IDE built around code editing, inline suggestions, project rules, codebase indexing, and agent workflows. Claude Code is Anthropic’s agentic coding tool that can read a codebase, edit files, run commands, and integrate with a developer’s environment from the terminal, IDE, desktop app, and browser.

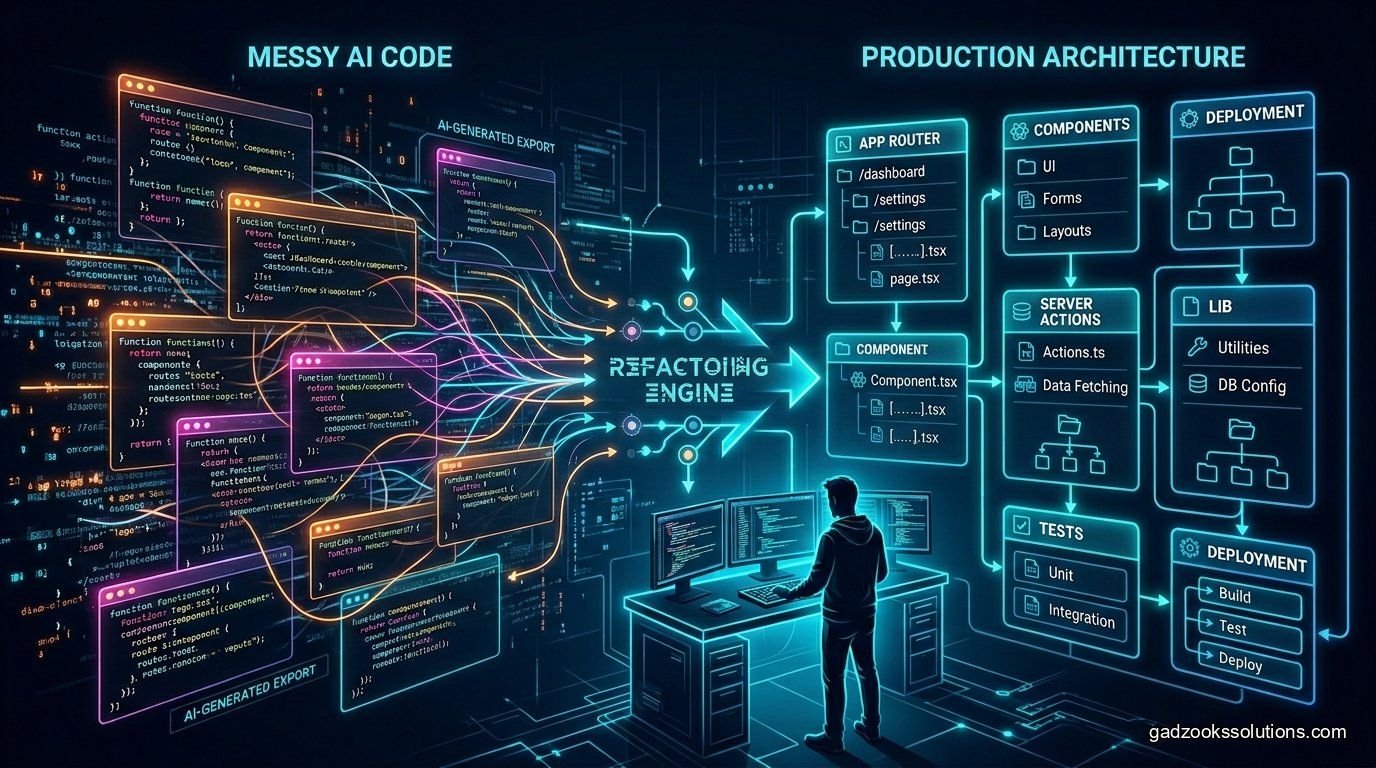

That difference matters most during production refactoring. Refactoring is not just “make this code cleaner.” In a real SaaS product, refactoring may touch authentication, database queries, state management, tests, deployment scripts, API contracts, and user-facing behavior. A bad AI-generated refactor can break production, introduce security bugs, or create inconsistent patterns across the codebase. The best tool is not the one that writes the most code. The best tool is the one that helps your team make controlled, reviewable, testable changes.

This guide compares Cursor AI and Claude Code from the perspective of founders, engineering leads, and developers who need to clean up a production codebase without turning AI assistance into uncontrolled “vibe coding.”

Quick Verdict

Use Cursor AI when you want an IDE-native workflow, fast code navigation, inline edits, rules, and tight feedback while actively reviewing changes file by file. Use Claude Code when you want a more autonomous agentic workflow that can inspect the repository, execute commands, modify multiple files, run tests, and help complete larger tasks through a terminal-driven process. For serious production refactoring, the strongest workflow is often both: Cursor for interactive review and day-to-day editing, Claude Code for planned multi-step refactors and test-driven implementation.

What Is Cursor AI?

Cursor is an AI-powered code editor built as a fork of Visual Studio Code, so the IDE experience is familiar to most developers. Its strength is that it keeps developers inside the editor while giving the AI access to code context. Cursor’s documentation describes features such as project rules, user rules, team rules, codebase indexing, and semantic search tools that help the AI reason over a repository instead of answering from an isolated snippet.

For refactoring, Cursor is strong because developers can stay close to the code. You can highlight a function, ask for a change, reference related files, review a diff, accept only the parts you trust, and keep working. Cursor’s rules system is also useful for enforcing project-specific expectations: naming conventions, architectural boundaries, preferred libraries, testing style, accessibility rules, and security constraints.

In short, Cursor is best when the developer wants high control. It is not just a chatbot. It is an editor workflow that can make AI feel like part of the coding environment.

What Is Claude Code?

Claude Code is Anthropic’s agentic coding assistant. According to Anthropic’s documentation, it can read a codebase, edit files, run commands, and integrate with development tools. This makes Claude Code especially useful for larger tasks where the AI needs to inspect project structure, understand dependencies, make changes across multiple files, and verify work with commands or tests.

Claude Code’s advantage is workflow depth. Instead of asking for a single function change, you can ask it to investigate a bug, propose a plan, modify affected files, run the test suite, inspect failures, and iterate. That agentic loop can be powerful for production refactoring because refactors usually require more than one edit. They require investigation, planning, implementation, verification, and cleanup.

The tradeoff is that higher autonomy requires stronger guardrails. Developers should ask for a plan before code changes, keep commits small, review diffs carefully, and avoid letting any AI tool modify critical logic without tests.

Cursor AI vs Claude Code: Feature Comparison

| Category | Cursor AI | Claude Code | Best Choice |
|---|---|---|---|
| Primary workflow | IDE-native editing, inline changes, codebase chat, rules, agent tools. | Agentic coding across files, commands, tests, and development tools. | Cursor for interactive editing; Claude Code for autonomous task execution. |
| Production refactoring | Excellent when the developer wants tight control over diffs. | Excellent for planned multi-step refactors with test execution. | Use both for high-risk refactors. |
| Codebase context | Strong codebase indexing and semantic search features. | Strong repository-level understanding through agentic inspection. | Tie, depending on workflow preference. |
| Rules and standards | Project, team, and user rules are a major strength. | Can follow instructions and project docs, but workflow is more agent/task focused. | Cursor for persistent editor rules. |
| Running tests and commands | Useful inside editor workflows, depending on setup. | Core strength: can run commands and iterate through failures. | Claude Code. |
| Developer review | Very strong because edits are visible inside the IDE. | Strong if used with small tasks, Git diffs, and test gates. | Cursor for manual review comfort. |
| Best user | Developers who want an AI-first editor and precise control. | Developers who want an agent to handle larger coding tasks. | Depends on team maturity. |

When Cursor AI Is Better for Refactoring

Cursor is usually better when the refactor requires constant human judgment. For example, if you are cleaning up a React component hierarchy, renaming props, improving state management, or converting repeated UI blocks into reusable components, Cursor keeps the developer in the driver’s seat. You can work one file at a time, use inline edits, check the output visually, and quickly correct the AI when it misunderstands your design system.

Cursor is also strong for teams that want persistent coding standards. Its rules system can document how the AI should behave across the project. A team can create rules such as “use server actions only in these folders,” “never introduce a new state library without approval,” “write tests for service-layer changes,” or “follow our existing API response pattern.” This reduces random AI drift and helps keep generated code closer to your existing architecture.

Cursor also works well for refactors where you want to ask many small questions: “Where is this function used?” “Can this hook be simplified?” “Why is this component re-rendering?” “Which files depend on this type?” For day-to-day engineering productivity, that fast editor-level feedback is hard to beat.

When Claude Code Is Better for Refactoring

Claude Code is usually better when the refactor has a clear goal, multiple steps, and a testable outcome. For example, you might ask Claude Code to migrate an API client from one library to another, split a large service into smaller modules, add missing tests around a fragile payment flow, or investigate why a test suite fails after a dependency upgrade.

The key advantage is that Claude Code can operate more like a coding agent. It can inspect files, make changes, run commands, see test failures, and continue. That makes it useful for tasks where the developer wants the AI to do more of the mechanical work after the plan is approved.

Claude Code is also helpful for legacy codebases where the first step is discovery. You can ask it to map a module, identify risky dependencies, explain hidden coupling, or propose a staged migration plan before touching code. That planning phase is critical for production refactoring because the cost of a wrong change is often higher than the cost of a slow change.
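A first pass at this kind of discovery can also be scripted, which gives you something to compare against the AI's explanation. The sketch below assumes a Python codebase and uses the standard-library `ast` module to build a rough reverse-dependency map: for each imported module, which files import it. The file names and contents in the example are hypothetical, and the approach only sees static imports, so treat it as a starting point rather than a complete coupling analysis.

```python
import ast
from collections import defaultdict


def imported_modules(source: str) -> set[str]:
    """Return the top-level names of modules imported by a piece of Python source."""
    modules: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            # "import a.b.c" depends on the top-level package "a".
            modules.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Absolute "from a.b import c"; relative imports (level > 0) are skipped.
            modules.add(node.module.split(".")[0])
    return modules


def reverse_dependencies(sources: dict[str, str]) -> dict[str, set[str]]:
    """Map each imported module to the set of files that import it."""
    used_by: dict[str, set[str]] = defaultdict(set)
    for path, source in sources.items():
        for module in imported_modules(source):
            used_by[module].add(path)
    return dict(used_by)


if __name__ == "__main__":
    # Hypothetical two-file project, inlined so the sketch runs without a repository.
    example = {
        "billing/service.py": "import stripe\nfrom db import session\n",
        "billing/api.py": "from billing.service import charge\nimport db.models\n",
    }
    for module, files in sorted(reverse_dependencies(example).items()):
        print(f"{module}: {sorted(files)}")
```

Modules that many files import are the ones where a refactor has the widest blast radius, so they are good candidates for the "plan first, touch last" treatment.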

The Safest Production Refactoring Workflow

Whether you choose Cursor, Claude Code, or both, the workflow matters more than the brand. AI coding tools should not be allowed to perform large uncontrolled rewrites in production systems. A safer workflow looks like this:

- Create a baseline: Make sure the current branch is clean and tests pass before AI edits begin.

- Define the goal: Write a clear task such as “extract billing validation into a service without changing API behavior.”

- Ask for a plan first: Require the AI to explain affected files, risks, and test strategy before editing.

- Limit the scope: Refactor one module, one feature, or one architectural boundary at a time.

- Use tests as gates: Unit, integration, and end-to-end tests should catch behavior changes.

- Review every diff: Do not accept AI changes just because they compile.

- Commit small: Smaller commits make rollback and code review easier.

- Run static checks: Use linting, type checking, dependency scanning, and security checks.

- Deploy carefully: Use preview environments, feature flags, or gradual rollout for risky changes.
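The baseline, test-gate, and static-check steps above can be sketched as a single script that runs before and after every AI edit. This is a minimal illustration, not a prescribed setup: the gate list assumes a Python project that uses `pytest`, `mypy`, and `ruff`, and the names `GATES`, `shell_runner`, and `first_failing_gate` are made up for this example. Swap in whatever commands your project already uses.

```python
import subprocess
from typing import Callable, Optional, Sequence

# A runner takes a command and returns its exit code (0 means success).
Runner = Callable[[Sequence[str]], int]


def shell_runner(command: Sequence[str]) -> int:
    """Run a command as a subprocess and return its exit code."""
    try:
        return subprocess.run(list(command), check=False).returncode
    except FileNotFoundError:
        # Treat a missing tool as a failed gate instead of crashing.
        return 127


def first_failing_gate(
    gates: Sequence[tuple[str, Sequence[str]]],
    run: Runner = shell_runner,
) -> Optional[str]:
    """Run gates in order and return the name of the first one that fails, or None."""
    for name, command in gates:
        if run(command) != 0:
            return name
    return None


# Assumed tooling; replace with your project's own commands.
GATES = [
    # Exits non-zero if tracked files differ from the last commit.
    ("clean working tree", ["git", "diff", "--quiet", "HEAD"]),
    ("unit tests", ["pytest", "-q"]),
    ("type check", ["mypy", "."]),
    ("lint", ["ruff", "check", "."]),
]
```

Calling `first_failing_gate(GATES)` before the AI starts confirms the baseline, and calling it again after each scoped change tells you which gate broke first. Injecting the runner as a parameter keeps the function easy to test without touching a real repository.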

Recommended Team Setup

For a startup or software agency, the most practical setup is not “Cursor or Claude Code.” It is a hybrid workflow. Use Cursor as the daily AI IDE for understanding code, editing components, writing small tests, and applying project rules. Use Claude Code for larger planned tasks where command execution, multi-file edits, and agentic iteration are valuable.

A healthy workflow could look like this: the engineer writes the refactor brief in a ticket, Claude Code investigates and proposes a plan, the engineer approves a narrow scope, Claude Code performs the initial implementation and runs tests, then the engineer reviews the diff in Cursor, tightens the code, adds missing tests, and opens a pull request. This gives you agent speed without losing engineering discipline.

Common Mistakes to Avoid

The biggest mistake is asking either tool to “refactor the whole app.” That prompt sounds efficient but usually creates chaos. Large rewrites hide bugs, make review harder, and often replace known imperfect code with unknown imperfect code. Another common mistake is relying on AI-generated tests that simply match the AI’s own implementation. Tests should validate user behavior and business rules, not just implementation details.
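One way to keep tests tied to business rules is to write the expected results by hand from the rule itself, then run the same cases against both the old and the new code. The sketch below assumes a hypothetical invoice calculation; the function names, the tax handling, and the cases are invented for illustration and are not taken from any real codebase.

```python
from decimal import Decimal


def legacy_invoice_total(lines, tax_rate):
    """Hypothetical pre-refactor code: clumsy, but production relies on its behavior."""
    total = Decimal("0")
    for qty, unit_price in lines:
        if qty > 0:
            total = total + Decimal(qty) * Decimal(unit_price)
    total = total + total * Decimal(tax_rate)
    return total.quantize(Decimal("0.01"))


def refactored_invoice_total(lines, tax_rate):
    """Hypothetical refactor: same rule, expressed more directly."""
    subtotal = sum((Decimal(q) * Decimal(p) for q, p in lines if q > 0), Decimal("0"))
    return (subtotal * (1 + Decimal(tax_rate))).quantize(Decimal("0.01"))


# Expected values come from the business rule ("sum positive-quantity lines,
# add tax, round to cents"), worked out by hand, not copied from either function.
CASES = [
    ([(2, "10.00")], "0.20", Decimal("24.00")),
    ([(1, "19.99"), (3, "5.00")], "0.10", Decimal("38.49")),
    ([(0, "10.00"), (-1, "5.00")], "0.20", Decimal("0.00")),
    ([], "0.20", Decimal("0.00")),
]


def check_behavior(invoice_total):
    """Assert that an implementation satisfies every business-rule case."""
    for lines, tax_rate, expected in CASES:
        actual = invoice_total(lines, tax_rate)
        assert actual == expected, f"{lines} at {tax_rate}: got {actual}, want {expected}"


if __name__ == "__main__":
    check_behavior(legacy_invoice_total)      # baseline before the refactor
    check_behavior(refactored_invoice_total)  # same cases after the refactor
    print("behavior preserved")
```

Because the cases never look inside either function, the AI can restructure the implementation freely, and the test still fails if user-visible totals change.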

Teams should also avoid mixing refactoring with feature changes. If the goal is to clean architecture, do not add new product behavior in the same pull request. If the goal is to add a feature, do not rewrite the module unless the rewrite is necessary. AI makes it tempting to change more than needed. Production engineering requires the opposite: small, intentional changes.

Production Refactoring Checklist

- The AI has read the relevant files, not guessed from a short prompt.

- The task has a written plan and rollback strategy.

- The change is small enough for a human to review properly.

- Tests cover the behavior being preserved.

- Type checking, linting, and security checks pass.

- No secrets, environment variable values, or production credentials were exposed in prompts.

- The final pull request explains what changed and what did not change.
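The "small enough to review" item is easier to enforce when it is measured. As a rough sketch, the script below summarizes the output of `git diff --numstat` (one `added<TAB>deleted<TAB>path` row per file) and compares it with size limits. The thresholds of 10 files and 400 changed lines are arbitrary assumptions for illustration, and `DiffSize`, `parse_numstat`, and `is_reviewable` are hypothetical names; pick limits that match how your team actually reviews.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DiffSize:
    files: int
    lines_changed: int


def parse_numstat(numstat: str) -> DiffSize:
    """Summarize `git diff --numstat` output into file and line counts."""
    files = 0
    lines_changed = 0
    for row in numstat.splitlines():
        if not row.strip():
            continue
        added, deleted, _path = row.split("\t", 2)
        files += 1
        # Binary files report "-" for both counts: count the file, not the lines.
        if added != "-":
            lines_changed += int(added) + int(deleted)
    return DiffSize(files, lines_changed)


def is_reviewable(size: DiffSize, max_files: int = 10, max_lines: int = 400) -> bool:
    """Return True if the change fits within the (assumed) review limits."""
    return size.files <= max_files and size.lines_changed <= max_lines


if __name__ == "__main__":
    # Sample text in the numstat format; in practice, pipe in the output of
    # a command such as `git diff --numstat main...HEAD`.
    sample = "12\t3\tsrc/billing.py\n-\t-\tassets/logo.png\n0\t40\tsrc/old_billing.py\n"
    size = parse_numstat(sample)
    print(size, "reviewable" if is_reviewable(size) else "too large, split it")
```

A check like this fits well in CI or a pre-push hook, where an oversized AI-generated change gets split before anyone is asked to review it.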

Final Verdict: Cursor AI or Claude Code?

Choose Cursor AI if your priority is IDE-native control, fast code navigation, inline review, and persistent project rules. Choose Claude Code if your priority is agentic execution, command running, multi-file implementation, and test-driven iteration. For production refactoring, the best answer is often to use both: Claude Code to plan and execute scoped changes, Cursor to review, refine, and maintain developer control.

The winner is not the tool that writes code fastest. The winner is the workflow that lets your team ship safer improvements with less technical debt. AI can accelerate refactoring, but it cannot replace architecture judgment, test discipline, security review, and careful deployment.

Need Help Refactoring an AI-Generated Codebase?

Gadzooks Solutions helps startups turn AI-generated prototypes into production-ready SaaS applications. We audit messy codebases, clean architecture, connect backends, improve test coverage, and build safer AI-assisted development workflows.

Frequently Asked Questions

Is Claude Code better than Cursor for refactoring?

Claude Code can be better for larger agentic refactoring tasks because it can inspect files, edit code, run commands, and iterate through test failures. Cursor is better when you want tight IDE-level review and more direct control over every edit.

Is Cursor AI enough for production development?

Yes, Cursor can be used in production workflows when paired with strong rules, code review, tests, type checking, and disciplined pull requests. The risk comes from accepting AI changes without review, not from using Cursor itself.

Can I use Cursor and Claude Code together?

Yes. Many teams can benefit from using Claude Code for planned multi-step tasks and Cursor for reviewing, refining, and editing the final code inside the IDE.

Which tool is better for beginners?

Cursor is usually easier for beginners because it feels like a normal IDE with AI added. Claude Code may be more powerful for advanced workflows, but it requires more discipline around planning, command execution, and reviewing agent changes.

Should AI tools rewrite my whole codebase?

No. Large uncontrolled rewrites are risky. Use small scoped refactors, review every diff, run tests, and avoid combining refactoring with unrelated feature changes.